There is a growing body of evidence that conversational AI systems (also known as chats) are unreliable, unsecure, and unsafe. Summed up in one word, AI systems are untrustworthy. This conclusion rests on demonstrations that AI hallucinates, behaves deceptively, and self-poisons (or self-pollutes) its originating model.

Unreliable

Indeed, models trained on past data most likely cannot handle inputs that fall outside the training domain. When a system faces unexpected inputs and finds nothing relevant in the model, it will fabricate data and produce wrong information.

Unsecure

In conversation, the system tends to agree with the user's line of thinking through flattery and agreement, thereby reinforcing the user's existing beliefs. At the same time, users are training the system for free while it harvests data from their sessions, and more than likely spies on them as well.

Unsafe

Caregivers have observed that use of these systems has led to some tragic events. Notably, suicidal ideation in young people was encouraged, while others fell prey to the addictive power of AI-generated chats. This has led several countries to forbid use of these systems by minors. They are thus obviously unsafe in real-life situations.

On the Road to Certification

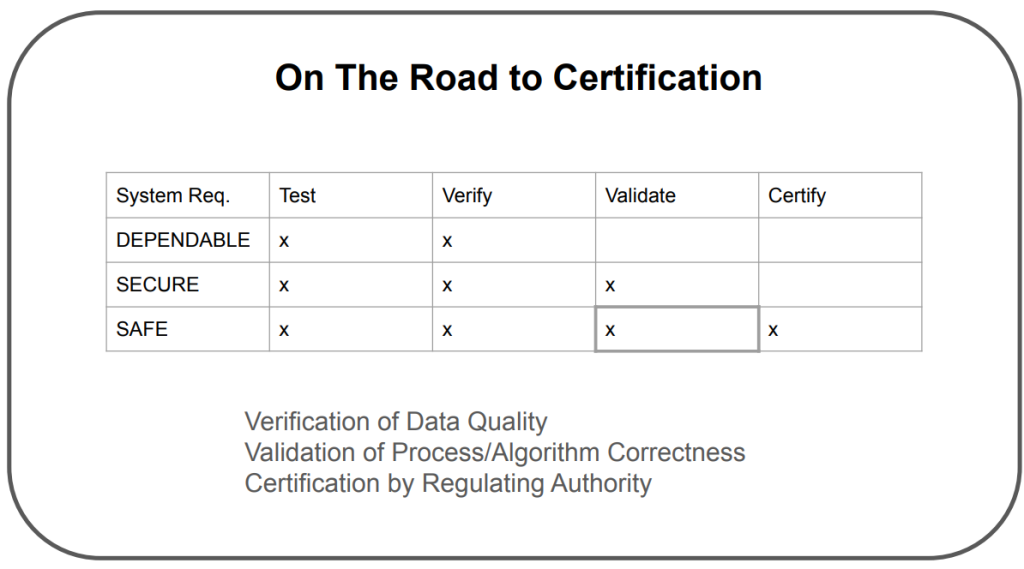

To deal with those three important deficiencies, one can envision a path toward certification consisting of three escalating stages, each expressed as system features and requirements.

Dependable

First, exhaustive and appropriate system testing should detect bugs and errors and prevent failures and misbehavior during operation.

Secure

Then, once a system exhibits dependable behavior, it should be subjected to security validation, ensuring that neither external actors nor internal agents can exploit security holes to compromise the system.

Safe

Dependable and secure system operation is a precondition for safe use in life-threatening circumstances or in situations involving large material losses and/or high risks. This will require regulatory scrutiny and clear, crisp certification procedures grounded in law, similar to the certification procedures used in the transportation industry.

Unpredictable = Untrustworthy?

Overall, such systems can arguably be used (prudently) in the consumer domain, but not (securely) in corporate environments. After a few attacks on private data sets and internal information collections, some large companies have forbidden the internal use of conversational AI systems.

In closing, conversational AI systems are unpredictable and therefore untrustworthy. Users should be aware of that. I think.

A version of this post originally appeared on the author’s personal Substack.

Image sources

- Figure: On the Road to Certification: Credit: Kemal A. Delic

- Deep Fake Concept: Credit: wildpixel